Key Steps in Machine Learning Model Deployment

Machine learning model deployment transforms trained models into real-world applications. This guide explores every key step—from model serialization and API development to containerization, orchestration, and continuous monitoring—ensuring your models deliver scalable, reliable, and consistent value in production environments.

Introduction to Machine Learning Deployment

Deploying machine learning (ML) models is the crucial phase that takes a model from experimentation to real-world production, where it can generate business value through actionable insights. Model deployment operationalizes AI, allowing predictions on new data to support decision-making, automation, and personalization across industries.

A well-executed ML deployment ensures scalability, reliability, and performance, bridging the gap between data science and production systems. In applications such as fraud detection, recommendation engines, and predictive maintenance, real-time inference capabilities are essential to deliver results with low latency and high accuracy.

Deployment strategies vary based on requirements from batch prediction, where models process large datasets periodically, to real-time inference, where predictions are delivered instantaneously. Modern ML deployment pipelines often use containerization (e.g., Docker) and orchestration (e.g., Kubernetes) to ensure consistent environments and automated scaling.

Finally, model deployment doesn’t end once the model goes live. Continuous monitoring, retraining, and optimization are vital to counteract issues like model drift, ensuring that predictive accuracy remains consistent as data evolves.

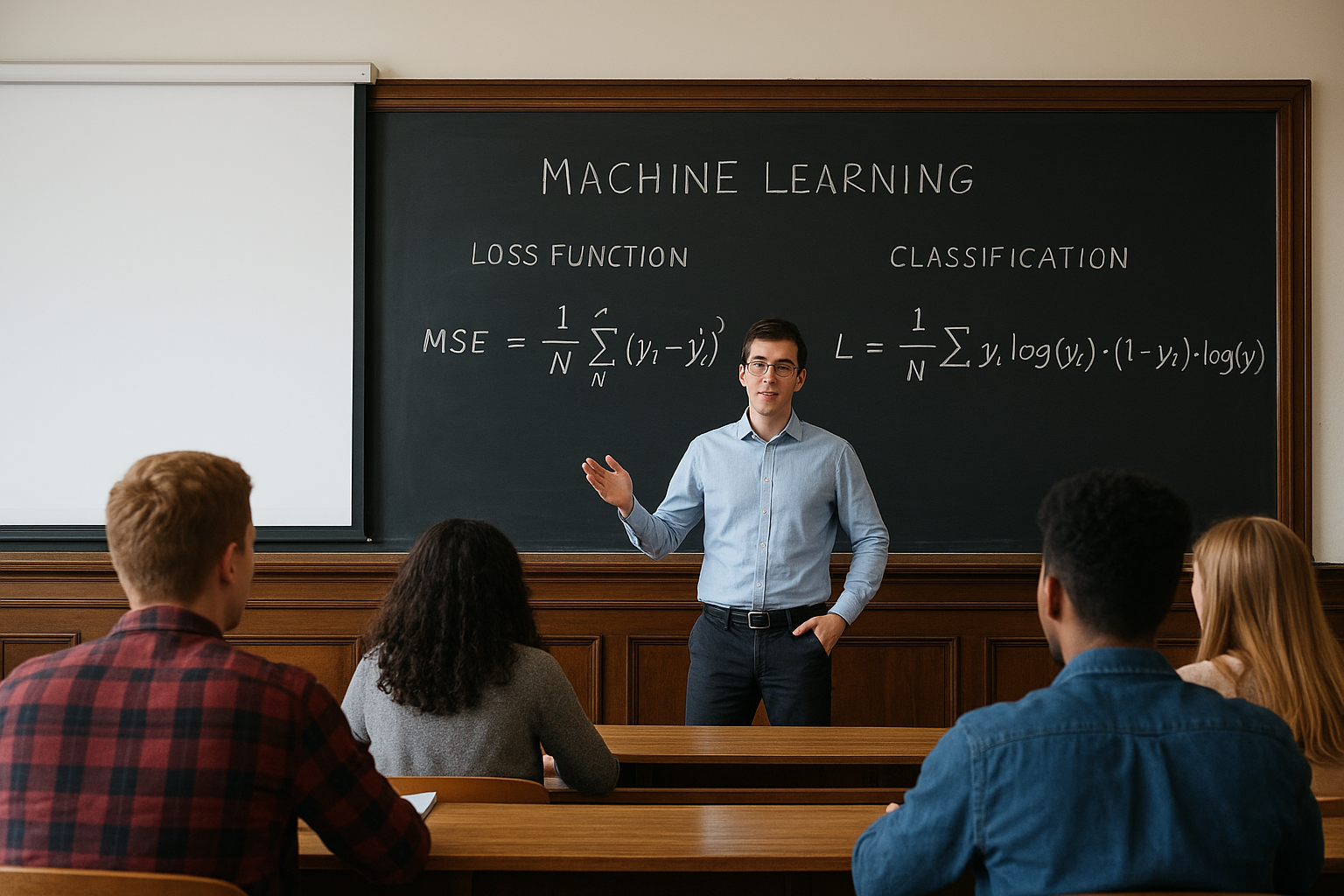

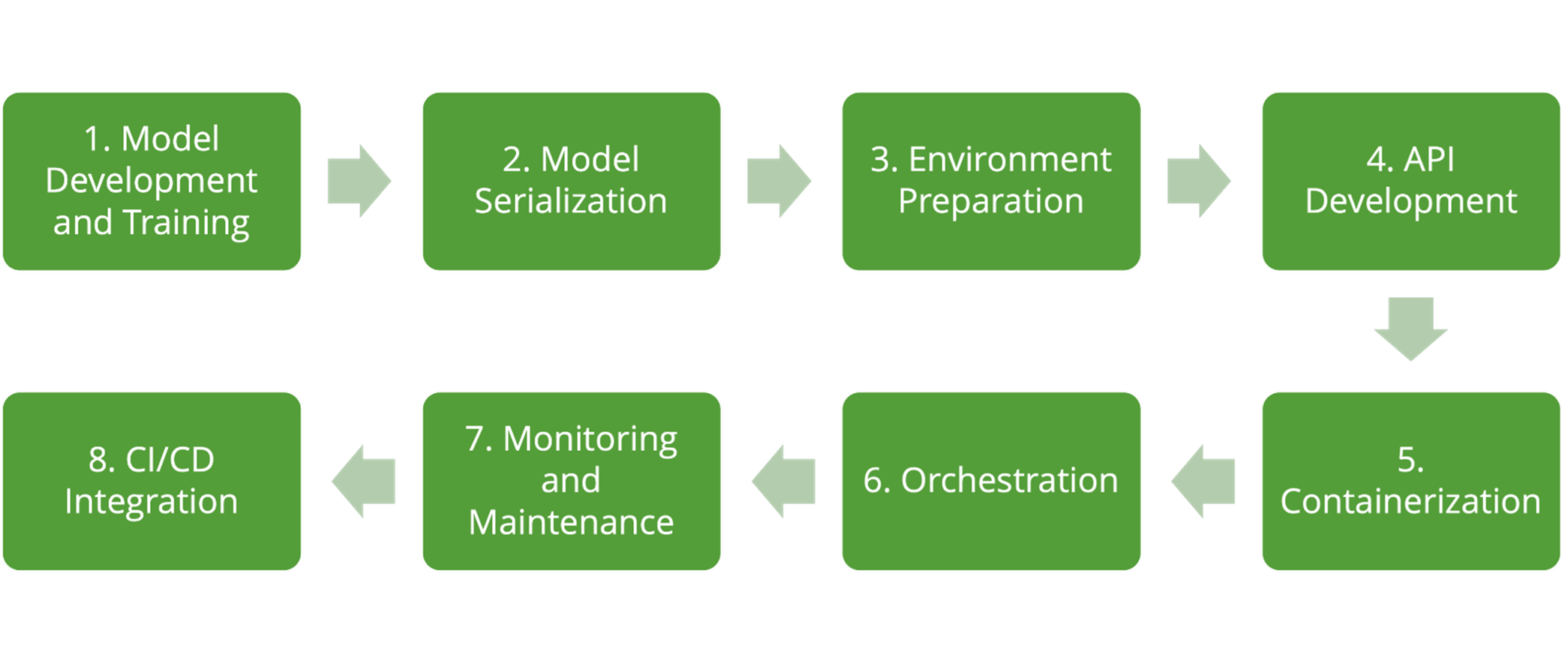

Critical Steps in Deploying an ML Model

Deploying ML models involves a structured, multi-phase process. Below are the essential steps that ensure a smooth, scalable, and secure deployment pipeline.

1. Model Development and Training

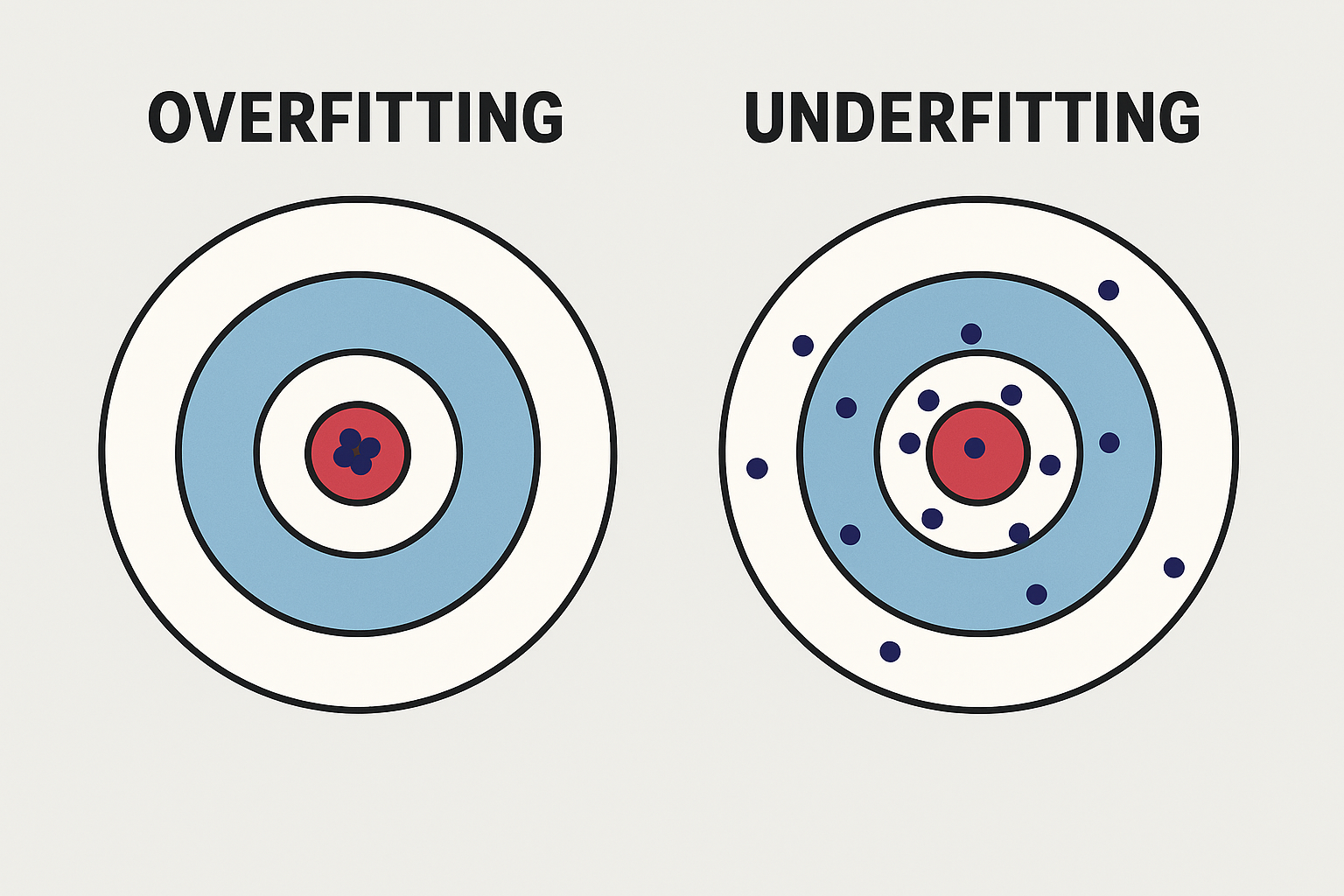

The process begins with model development, where data scientists select the right algorithms, preprocess data, and tune hyperparameters. Evaluation metrics such as accuracy, precision, recall, F1 score, or mean squared error assess performance depending on the task type. Rigorous testing ensures model generalizability and prevents overfitting, preparing it for production readiness.

2. Model Serialization

Once trained, the model must be serialized—converted into a format that can be efficiently saved, transferred, and reloaded. Tools like joblib, pickle, or framework-specific methods such as TensorFlow’s model.save() or PyTorch’s torch.save() make this possible. Standardized model formats like ONNX (Open Neural Network Exchange) are increasingly used to enable cross-platform compatibility and streamline deployment workflows.

3. Environment Preparation

Before deployment, it’s crucial to configure the production environment. This involves selecting a deployment platform, such as AWS SageMaker, Google AI Platform, Azure ML, or on-premise servers, and provisioning compute resources (CPU, GPU, or TPU). Matching the production setup with the development environment reduces dependency conflicts and performance discrepancies, ensuring consistent behavior.

4. API Development

To expose the model to end users or applications, an API (Application Programming Interface) is developed. Using frameworks like Flask, FastAPI, or Django, developers create endpoints—commonly /predict—to accept data inputs and return predictions. RESTful APIs or GraphQL APIs enable seamless integration with web apps, mobile apps, or backend systems, transforming the model into a real-time decision engine.

5. Containerization

Containerization using Docker packages the model, its dependencies, and environment configurations into a lightweight, portable unit. A Dockerfile defines how the image is built, ensuring version control, portability, and reproducibility across multiple environments. Containerized deployments improve scalability and simplify collaboration across data science, DevOps, and software engineering teams.

6. Orchestration

For enterprise-scale deployment, Kubernetes (K8s) is the industry standard for orchestrating containers. It automates tasks such as load balancing, scaling, failover recovery, and service discovery. Deployment manifests and Helm charts define how multiple containers interact, ensuring fault tolerance and optimized resource usage. Orchestration allows ML systems to dynamically adjust to traffic spikes and minimize downtime.

7. Monitoring and Maintenance

After deployment, monitoring is essential to ensure optimal performance and reliability. Metrics like latency, throughput, error rates, and resource utilization are continuously tracked using tools such as Prometheus or Grafana. Monitoring also includes model performance tracking to detect model drift or data quality issues. Retraining pipelines can be automated to update the model when performance degradation is detected.

8. CI/CD Integration

Integrating Continuous Integration and Continuous Deployment (CI/CD) pipelines automates testing, validation, and deployment workflows. Tools like GitHub Actions, Jenkins, or GitLab CI/CD ensure that new versions of the model or API are automatically tested and rolled out safely. CI/CD promotes MLOps (Machine Learning Operations) best practices, enabling rapid iteration, reproducibility, and efficient version control.

Conclusion

By following these structured steps, organizations can transform ML models from prototypes into production-ready assets. Successful deployment requires not only technical execution but also robust monitoring, automation, and governance to ensure sustainable long-term performance. In the age of MLOps, automated deployment pipelines and cloud-native tools are redefining how enterprises operationalize AI, ensuring continuous learning and real business impact.

Knowledge - Certification - Community