Mastering ETL in Data Engineering: Extract, Transform, Load Explained

ETL (Extract, Transform, Load) is a foundational process in data engineering. It turns data scattered across multiple systems into integrated, analysis-ready datasets, and automated, scalable, cloud-ready pipelines built on it support reliable insights and informed decision-making.

Introduction to ETL

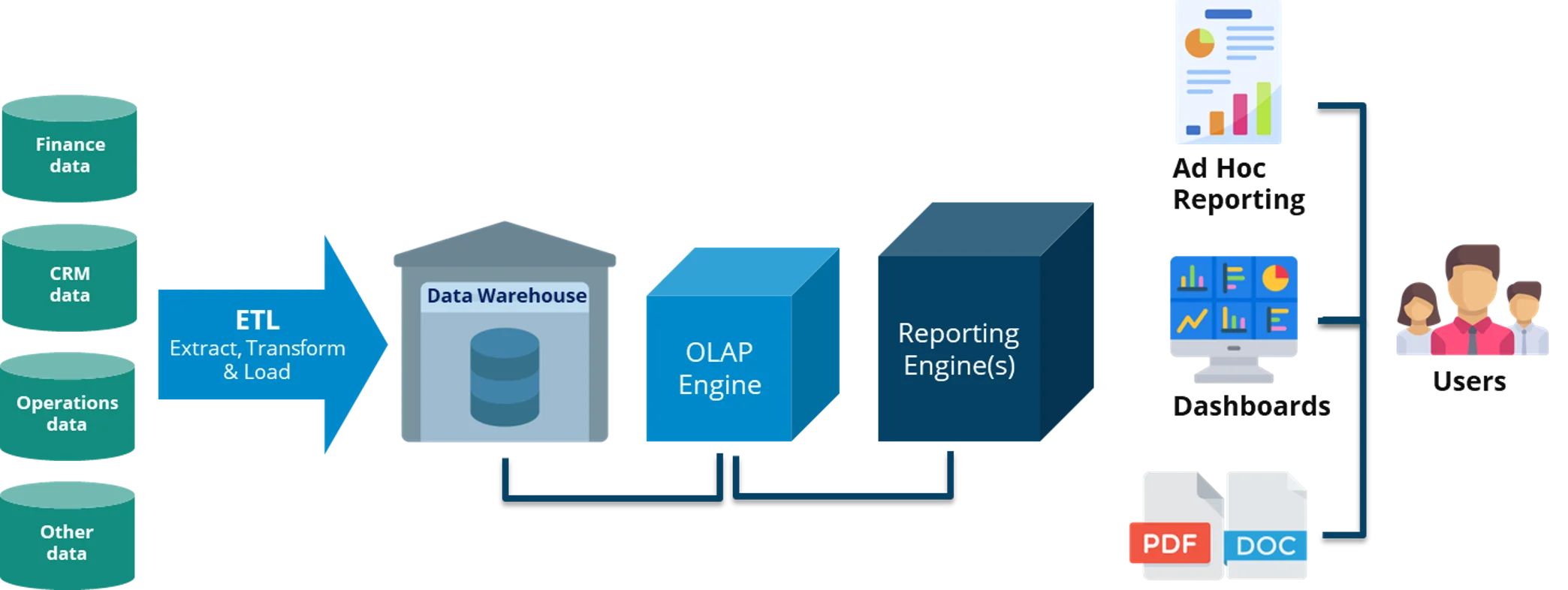

ETL, which stands for Extract, Transform, Load, is a fundamental process in data engineering and data management. It enables organizations to collect, clean, and consolidate data from multiple systems into a single, reliable source for analytics and business intelligence. ETL serves as the backbone of data pipelines, ensuring that information flows efficiently and accurately across enterprise systems.

The Importance of ETL in Data Engineering

ETL is central to modern data engineering because each of its stages solves a specific part of the data integration problem across enterprise systems:

- Extraction ensures data is gathered from all relevant systems, from transactional databases to IoT sensors and cloud applications.

- Transformation ensures accuracy, consistency, and usability by cleaning and aligning data to organizational standards.

- Loading centralizes the processed data in a high-performance data repository, ensuring quick access for business users and analysts.
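To make the three stages concrete, here is a minimal sketch using only the Python standard library. The CSV content, the `orders` table, and the function names are illustrative assumptions, and an in-memory SQLite database stands in for the target repository.

```python
import csv
import io
import sqlite3

# Hypothetical source data: a CSV export from a transactional system.
RAW_CSV = """order_id,amount,country
1001, 19.99 ,us
1002,5.00,DE
1002,5.00,DE
"""


def extract(text):
    """Extract: read raw rows from the source without changing them."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows):
    """Transform: fix types, trim whitespace, normalize case, drop duplicates."""
    seen = set()
    cleaned = []
    for row in rows:
        order_id = int(row["order_id"])
        if order_id in seen:
            continue  # skip repeated order IDs
        seen.add(order_id)
        amount = float(row["amount"].strip())
        country = row["country"].strip().upper()
        cleaned.append((order_id, amount, country))
    return cleaned


def load(rows, conn):
    """Load: write the cleaned rows into the target table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders "
        "(order_id INTEGER PRIMARY KEY, amount REAL, country TEXT)"
    )
    conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", rows)
    conn.commit()


conn = sqlite3.connect(":memory:")
load(transform(extract(RAW_CSV)), conn)
result = conn.execute(
    "SELECT order_id, amount, country FROM orders ORDER BY order_id"
).fetchall()
print(result)
```

Keeping each stage in its own function means a failure can be traced to one step, and the transformation logic can be tested without touching a source system or a database.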

In today’s era of big data and AI-driven analytics, ETL workflows play a pivotal role in ensuring that data remains accurate, timely, and actionable. Automated ETL tools also help scale data operations, reduce manual errors, and enhance compliance with data governance policies.

Ultimately, a well-architected ETL process empowers organizations to make informed, data-driven decisions with confidence.

ETL Tools and Technologies

The ETL ecosystem has evolved rapidly, offering both traditional and modern solutions that support data pipeline automation and orchestration. Key categories include:

- ETL Tools: Talend, Informatica, and Apache NiFi provide comprehensive ETL workflows for enterprise data management.

- Data Integration Platforms: Microsoft SSIS and Oracle Data Integrator (ODI) are widely used for structured data transformation.

- Cloud-Based ETL Services: AWS Glue, Azure Data Factory, and Google Cloud Dataflow simplify data pipeline automation in the cloud.

- Programming Languages and Libraries: Python and SQL, together with libraries such as pandas or PySpark, enable developers to build custom, flexible ETL workflows.

The adoption of cloud-native ETL tools continues to rise, driven by scalability, lower operational costs, and integration with data lakehouse architectures.

Modern Variants of ETL

As data volumes and velocity increase, new paradigms of ETL have emerged to meet the needs of real-time analytics and cloud computing.

- ELT (Extract, Load, Transform): Here, data is loaded first into the target system (such as Snowflake or BigQuery) and transformed later. ELT leverages the power of modern cloud data warehouses to handle large-scale transformations efficiently.

- Streaming ETL: Processes records continuously as they arrive instead of in scheduled batches, using platforms such as Apache Kafka and Apache Flink, so that insights are available with low latency for dynamic business operations.

These modern ETL approaches are essential in supporting AI, IoT, and predictive analytics workflows that require near-instant data processing.
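The difference between ETL and ELT is mostly a matter of where the transformation runs. The sketch below illustrates the ELT ordering under some assumptions: an in-memory SQLite database stands in for a cloud warehouse such as Snowflake or BigQuery, and the `raw_orders` and `revenue_by_country` tables are hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")  # stand-in for a cloud data warehouse

# Load: raw records land untouched, with every column stored as text.
conn.execute("CREATE TABLE raw_orders (order_id TEXT, amount TEXT, country TEXT)")
raw_rows = [
    ("1001", " 19.99", "us"),
    ("1002", "5.00", "DE"),
    ("1003", "7.50", "de"),
]
conn.executemany("INSERT INTO raw_orders VALUES (?, ?, ?)", raw_rows)

# Transform: runs inside the target system, as SQL over the raw table.
conn.execute(
    """
    CREATE TABLE revenue_by_country AS
    SELECT UPPER(TRIM(country)) AS country,
           ROUND(SUM(CAST(TRIM(amount) AS REAL)), 2) AS revenue
    FROM raw_orders
    GROUP BY UPPER(TRIM(country))
    """
)

revenue = conn.execute(
    "SELECT country, revenue FROM revenue_by_country ORDER BY country"
).fetchall()
print(revenue)
```

Because the raw table is preserved, the transformation can be changed and rerun later without extracting the data again, which is one reason ELT fits well with warehouses that have inexpensive storage and scalable compute.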

Example: How an Organization Can Use ETL

Consider a retail company that operates both online and in physical stores and wants to analyze customer purchasing behavior.

Extract

The company collects data from:

- Point of Sale (POS) systems

- E-commerce platforms

- CRM systems

- Supply chain and logistics tools

- Marketing and social media platforms

All of this data is extracted into a centralized staging area, so that records from every channel are available in one place, in their original form, before transformation begins.
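As a rough sketch of this step, the code below pulls records from two of the sources into a staging list. The CSV and JSON payloads, field names, and extractor functions are all hypothetical; real extractors would query a database or call an API instead of parsing inline strings.

```python
import csv
import io
import json

# Hypothetical exports from two source systems.
POS_CSV = (
    "sale_id,store,sku,amount,sold_on\n"
    "P-1,Store 12,SKU-9,19.99,03/15/2024\n"
    "P-2,Store 07,SKU-3,5.00,03/16/2024\n"
)
ECOMMERCE_JSON = (
    '[{"order": "W-1", "sku": "SKU-9", "total": "19.99", '
    '"date": "2024-03-15", "email": "ana@example.com"}]'
)


def extract_pos(text):
    """Yield one staged record per POS row."""
    for row in csv.DictReader(io.StringIO(text)):
        yield {"source": "pos", "payload": row}


def extract_ecommerce(text):
    """Yield one staged record per online order."""
    for record in json.loads(text):
        yield {"source": "ecommerce", "payload": record}


# The staging area keeps each record as delivered, tagged with its origin,
# so later transformation steps can be rerun without re-extracting.
staging = [*extract_pos(POS_CSV), *extract_ecommerce(ECOMMERCE_JSON)]

counts = {}
for record in staging:
    counts[record["source"]] = counts.get(record["source"], 0) + 1
print(counts)
```

Note that the two sources disagree on field names and date formats. The extract step deliberately leaves those differences alone; reconciling them is the job of the transformation step.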

Transform

During transformation, the company performs:

- Data cleaning to eliminate duplicates and errors.

- Standardization of formats (dates, currencies, IDs).

- Aggregation to derive metrics like sales per store or region.

- Enrichment by combining online and offline customer data.

These processes ensure consistent, accurate, and business-ready datasets for analysis.
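The following sketch shows what cleaning, standardization, and aggregation might look like for a handful of staged sales records. The records, field names, and the fixed exchange rate are assumptions made for illustration. Enrichment is not shown; it would typically be a join between online and in-store records on a shared customer key.

```python
from collections import defaultdict
from datetime import datetime
from decimal import Decimal

# Hypothetical staged records with a duplicate and inconsistent formats.
sales = [
    {"sale_id": "P-1", "store": "store 12", "amount": "19.99", "currency": "USD", "sold_on": "03/15/2024"},
    {"sale_id": "P-1", "store": "store 12", "amount": "19.99", "currency": "USD", "sold_on": "03/15/2024"},
    {"sale_id": "P-2", "store": "Store 07", "amount": "10.00", "currency": "EUR", "sold_on": "2024-03-16"},
    {"sale_id": "P-3", "store": "Store 12", "amount": "5.01", "currency": "USD", "sold_on": "2024-03-16"},
]
USD_PER_UNIT = {"USD": Decimal("1"), "EUR": Decimal("1.10")}  # assumed fixed rate


def parse_date(value):
    """Accept the two date formats used above and return an ISO 8601 date."""
    for fmt in ("%Y-%m-%d", "%m/%d/%Y"):
        try:
            return datetime.strptime(value, fmt).date().isoformat()
        except ValueError:
            continue
    raise ValueError(f"unrecognized date: {value!r}")


def transform(records):
    """Drop duplicate sale IDs and standardize store names, currency, and dates."""
    seen, clean = set(), []
    for r in records:
        if r["sale_id"] in seen:
            continue
        seen.add(r["sale_id"])
        clean.append({
            "sale_id": r["sale_id"],
            "store": r["store"].strip().title(),
            "amount_usd": Decimal(r["amount"]) * USD_PER_UNIT[r["currency"]],
            "sold_on": parse_date(r["sold_on"]),
        })
    return clean


def sales_per_store(records):
    """Aggregate the standardized amounts by store."""
    totals = defaultdict(Decimal)
    for r in records:
        totals[r["store"]] += r["amount_usd"]
    return dict(totals)


clean = transform(sales)
totals = sales_per_store(clean)
print(totals)
```

`Decimal` is used for money here to avoid binary floating-point rounding in the totals. Raising an error on an unrecognized date, rather than guessing, keeps bad records from silently entering the warehouse.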

Load

The transformed data is loaded into a cloud data warehouse, such as Amazon Redshift or Google BigQuery, for fast querying and dashboard generation.
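A load step usually needs to be safe to rerun, since pipelines are retried after failures. The sketch below shows one way to do that, assuming a hypothetical `fact_sales` table keyed by `sale_id`. SQLite stands in for the warehouse so the example runs anywhere; Redshift and BigQuery have their own client libraries and bulk-load mechanisms, which are not shown.

```python
import sqlite3

conn = sqlite3.connect(":memory:")  # stand-in for the cloud data warehouse
conn.execute(
    """
    CREATE TABLE fact_sales (
        sale_id    TEXT PRIMARY KEY,
        store      TEXT NOT NULL,
        amount_usd REAL NOT NULL,
        sold_on    TEXT NOT NULL
    )
    """
)


def load(conn, rows):
    """Load a batch in one transaction; reloading the same batch adds no rows."""
    with conn:  # commits on success, rolls back if an error is raised
        conn.executemany(
            "INSERT OR REPLACE INTO fact_sales "
            "VALUES (:sale_id, :store, :amount_usd, :sold_on)",
            rows,
        )


# Hypothetical output of the transformation step.
batch = [
    {"sale_id": "P-1", "store": "Store 12", "amount_usd": 19.99, "sold_on": "2024-03-15"},
    {"sale_id": "P-2", "store": "Store 07", "amount_usd": 11.00, "sold_on": "2024-03-16"},
]

load(conn, batch)
load(conn, batch)  # simulated retry of the same batch
row_count = conn.execute("SELECT COUNT(*) FROM fact_sales").fetchone()[0]
print(row_count)
```

The primary key plus `INSERT OR REPLACE` makes the load idempotent: the second call overwrites the same two rows instead of duplicating them.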

Analysis and Utilization

Using BI tools like Tableau, Power BI, or Looker, the company can identify:

- Top-selling products, to guide inventory optimization.

- Customer segments for targeted marketing.

- Campaign effectiveness across digital and physical channels.

- Supply chain performance for better operational efficiency.
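BI tools ultimately issue queries against the warehouse. As an illustration of the first item in the list above, the sketch below ranks products by units sold across channels. The `fact_sales` schema and the sample rows are made up for the example, and SQLite again stands in for the warehouse.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE fact_sales (sku TEXT, channel TEXT, quantity INTEGER, amount_usd REAL)"
)
# Hypothetical sales facts combining in-store and online channels.
conn.executemany(
    "INSERT INTO fact_sales VALUES (?, ?, ?, ?)",
    [
        ("SKU-9", "store", 3, 59.97),
        ("SKU-9", "online", 2, 39.98),
        ("SKU-3", "store", 4, 20.00),
        ("SKU-5", "online", 1, 120.00),
    ],
)

# Rank products by units sold, breaking ties by SKU for a stable order.
top_products = conn.execute(
    """
    SELECT sku,
           SUM(quantity) AS units,
           ROUND(SUM(amount_usd), 2) AS revenue
    FROM fact_sales
    GROUP BY sku
    ORDER BY units DESC, sku
    LIMIT 2
    """
).fetchall()

for sku, units, revenue in top_products:
    print(sku, units, revenue)
```

This query only works across channels because the ETL process has already placed online and in-store sales in one table with consistent SKUs and currency.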

This example demonstrates how ETL empowers businesses to unify data, gain actionable insights, and drive intelligent decision-making across all departments.
